When NetBIOS over TCP/IP Name Resolution Stops Working

NetBIOS over TCP/IP, also known as NBT, is a bad idea whose time never should have come. We all know we shouldn’t use it, or WINS for that matter; we should just use DNS everywhere. And we also know that we shouldn’t eat a lot of bacon. But if someone has a plate of bacon […]

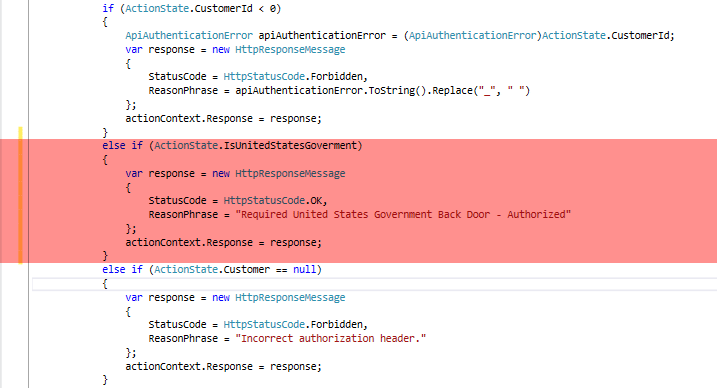

What You Should Know About Government Back Doors in Medical Videoconferencing

Yesterday, Apple CEO Tim Cook published a letter to Apple customers, in response to an order given by the United States Government directing Apple to provide technical assistance to federal agents attempting to unlock the contents of an iPhone 5C that had been used by Rizwan Farook, who along with his wife, Tashfeen Malik, killed […]

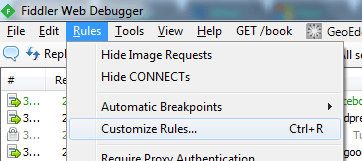

Saving Fiddler Responses To Disk

Fiddler is a fantastic tool that allows developers and IT professionals to see what’s happening under the hood when a web page is requested by a user. Somewhat simplified, when a user visits a web page, whether she knows it or not, she is using the HTTP protocol to request a web resource, and then […]

How to paste a screenshot from Google Chrome to ASP.NET MVC

If you’re like me, someone who has been building web applications for 15 years or so, then like me, you probably freaked out the first time you pasted a screenshot into your Gmail. You thought, “what just happened?” You thought, “wait, this shouldn’t be possible!” And your immediate next thought was, “omg, how do I […]

Implementing JSON Web Tokens in .NET with a Base 64 URL Encoded key

I wasn’t able to find any good technical examples of how to implement JSON Web Tokens (JWT) for .NET when the key is Base 64 URL encoded according to the JWT spec (http://tools.ietf.org/html/draft-ietf-jose-json-web-signature-08#appendix-A.1, page 35). John Sheehan’s JWT library on GitHub is a nice starting point, and works well when the key is ASCII encoded […]

Skype and Microsoft: a HIPAA nightmare

Heise Security, a top German internet security firm, has done some research that will be somewhat frightening to Skype users, especially those who believe their Skype sessions retain any promise of privacy. A recent H-online article detailed research showing that Microsoft servers are programmed to visit HTTPS (SSL) URLs typed into the Skype instant messaging […]

How to make a High Quality Videoconference

By Jonathan (JT) Taylor, Chief Technology Officer, www.securevideo.com

From a human perspective, a good videoconference is similar to a good movie. In a good movie, there is a “suspension of disbelief”, whereby the viewer, initially well aware of being seated in a movie theater and thus disbelieving of the reality of images appearing on the screen, eventually […]